Taylor Mezaraups, Graduate Student

My research interests focus primarily on temporal integration, its limiting timescale, and the ways in which it can be altered by music and rhythm.

Temporal integration is the process by which individual, discrete stimuli are bridged together across time to create meaningful and coherent scenes. A good example of this is the feeling of rhythm, and this is where my research began. When we hear a rhythm, we are combining individual beats to create a larger object, or gestalt, that is greater than the sum of its parts. Physically, we hear only individual, discrete notes, yet we bridge them together across time to create an emergent rhythm.

The Feeling of Rhythm

My first study investigated the limiting timescale for rhythm perception. Our capacity to perceive rhythm breaks down when the time between beats becomes sufficiently long, as is apparent in the fact that the metronome, used by musicians to keep time, will not tick slower than 40 beats per minute (bpm; 1.5 seconds between beats). You can experience this for yourself by trying to hum a tune at a very slow pace. Once a certain amount of time (1.5 to 2 seconds) is placed between notes, the ability to hear the melody goes away, and all we perceive are individual notes. There is a specific interval of time at which we go from hearing the overall rhythm or melody to hearing only isolated notes, and this limiting interval for rhythm perception is what we are interested in. We believe that it is not just a time interval but a timescale that varies with body size in the same way that other body timescales do.
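
The relationship between tempo and the time between beats is simple arithmetic, and it can help to see it spelled out. The following is a minimal Python sketch; the function names are illustrative and not part of the study.

```python
def beat_interval_seconds(bpm: float) -> float:
    """Return the time between successive beats, in seconds, for a tempo in bpm."""
    if bpm <= 0:
        raise ValueError("tempo must be positive")
    return 60.0 / bpm


def tempo_bpm(interval_seconds: float) -> float:
    """Return the tempo in bpm for a given time between beats, in seconds."""
    if interval_seconds <= 0:
        raise ValueError("interval must be positive")
    return 60.0 / interval_seconds


# 40 bpm is the slow limit of the metronome mentioned above.
for bpm in (120, 60, 40, 30):
    print(f"{bpm:>3} bpm -> {beat_interval_seconds(bpm):.2f} s between beats")
```

The 1.5 to 2 second range described above corresponds to tempi of 40 down to 30 bpm.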

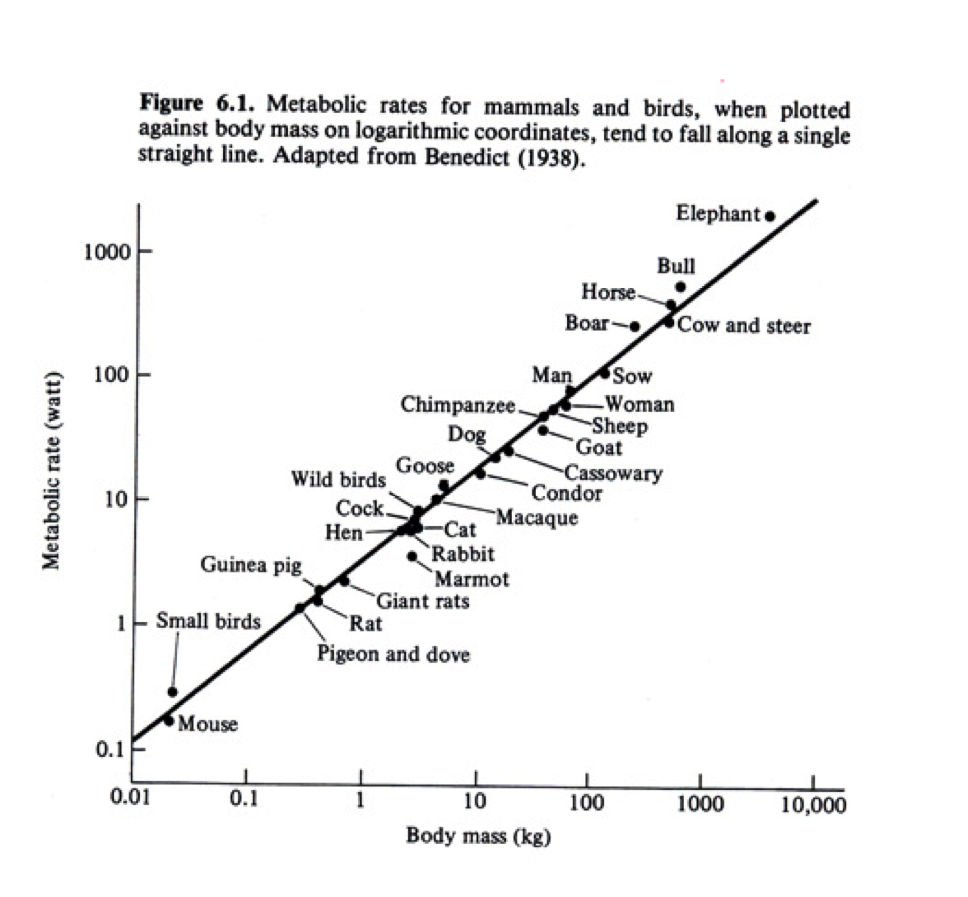

Timescales are pervasive throughout the biological world, and our bodies, like the bodies of all animals, are governed by many timescales (e.g., respiration rate, heart rate, lifetime). All of these timescales depend on the size of an animal’s body, as described by Kleiber’s Law, which states that metabolic rate scales as mass to the ¾ power (Schmidt-Nielsen, 1984; human data suggest mass^(2/3) [Johnstone et al., 2005]). This means that a larger animal, such as an elephant, has a longer time between breaths, a longer time between heartbeats, and a longer lifetime than a dog, a cat, or a mouse. Since such scaling laws exist for other body timescales, it would make sense that our brains, too, are governed by similar laws.

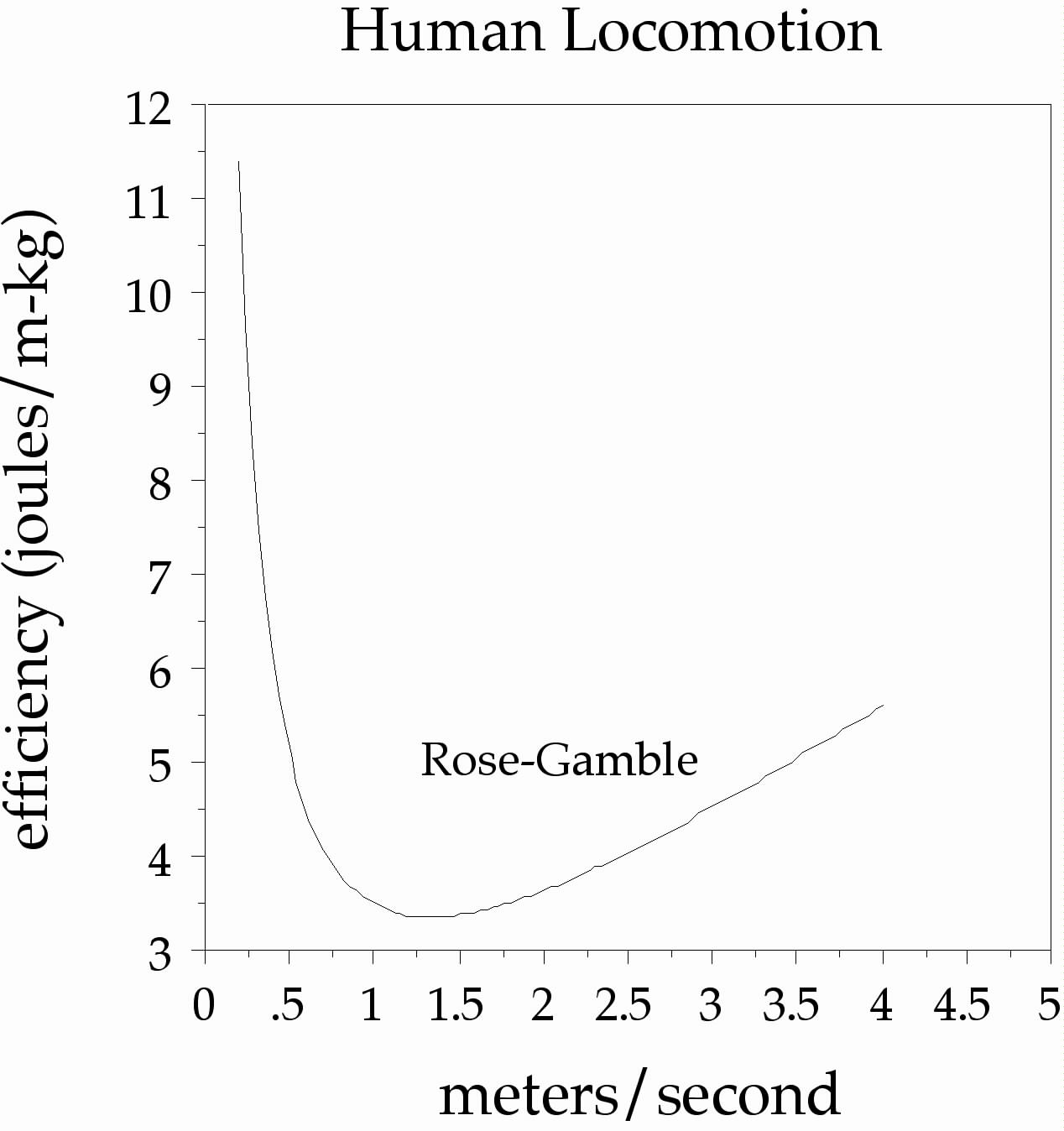

Another important animal timescale is walking speed. Our legs are essentially pendula swinging from our hips, so they are resonant systems with preferred periods. This is made evident by something called the Rose-Gamble Law, which identifies a most efficient walking speed of about 1.5 m/s (Rose & Gamble, 1994). The period itself follows from the pendulum formula, t = 2π √(length/gravity), which suggests that the timescale might be related to height by a power of ½.
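
To make the ½-power relationship concrete, here is a short Python sketch of the pendulum formula above. It treats the leg as an ideal simple pendulum, and the leg lengths are hypothetical values chosen only for illustration, not measurements from the study.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2 (approximate standard value)


def pendulum_period(length_m: float, gravity: float = G) -> float:
    """Period of an ideal simple pendulum: t = 2*pi*sqrt(length/gravity)."""
    if length_m <= 0 or gravity <= 0:
        raise ValueError("length and gravity must be positive")
    return 2.0 * math.pi * math.sqrt(length_m / gravity)


# Hypothetical leg lengths in meters, for illustration only.
for length in (0.6, 0.8, 1.0):
    print(f"length {length:.1f} m -> period {pendulum_period(length):.2f} s")

# Quadrupling the length doubles the period: the 1/2-power scaling.
ratio = pendulum_period(4.0) / pendulum_period(1.0)
print(f"period ratio for a 4x longer pendulum: {ratio:.2f}")
```

If leg length is roughly proportional to height, the same ½ power carries over to height.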

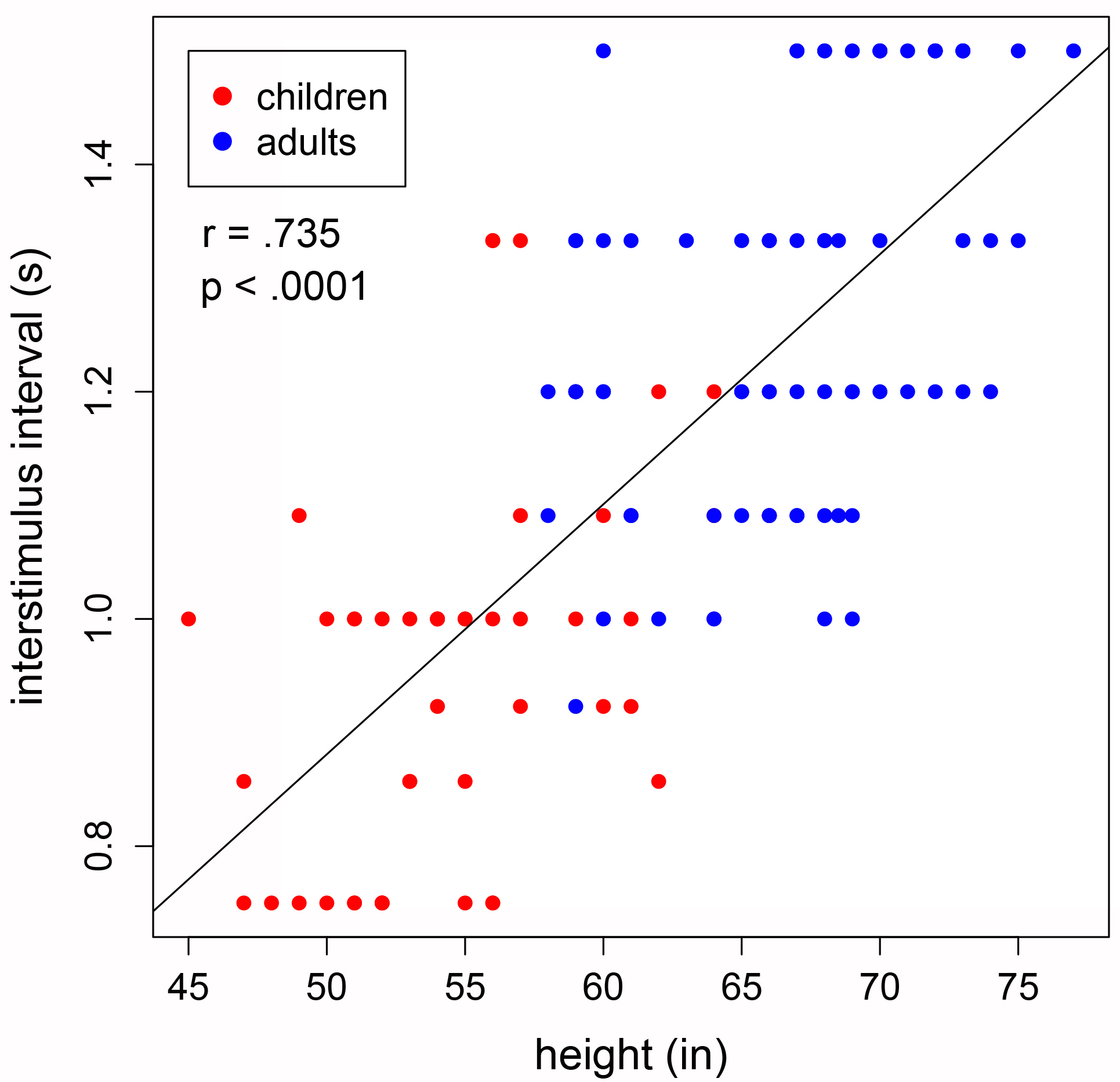

We looked into the relationship between height and cognitive timescale using rhythm studies. We used an electronic drum to measure drumming ability at various speeds (tempi). We measured the slowest tempo at which each person could drum accurately (t-horizon) and found that it was related to height by a power of 1.1 (r = 0.74, p < 0.0001), suggesting that the scaling law is based on metabolic rate (BMR ~ Mass^(2/3); T ~ 1/(BMR/Mass) = 1/(Mass^(2/3)/Mass) = 1/Mass^(-1/3) = Mass^(1/3); Mass ~ height^3, so T ~ height^(3 × 1/3) = height^1). Taller subjects, with presumably slower metabolic rates per unit of body mass, were able to drum accurately at slower speeds than shorter subjects could.
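
The exponent bookkeeping in the derivation above, and the way a power-law exponent can be read off as a slope in log-log coordinates, can be sketched in Python as follows. This is an illustration under stated assumptions, not the analysis used in the study: the function names are hypothetical, and the data are synthetic and noise-free, generated from a known exponent purely to check that the fit recovers it.

```python
import math
from fractions import Fraction


def timescale_height_exponent(bmr_mass_exponent: Fraction) -> Fraction:
    """Exponent b in T ~ height^b, assuming T ~ Mass/BMR and Mass ~ height^3."""
    mass_exponent = 1 - bmr_mass_exponent  # T ~ Mass^(1 - a) when BMR ~ Mass^a
    return 3 * mass_exponent               # substitute Mass ~ height^3


def fit_power_law_exponent(xs, ys):
    """Least-squares slope of log(y) against log(x), i.e. b in y ~ x^b."""
    log_x = [math.log(x) for x in xs]
    log_y = [math.log(y) for y in ys]
    mean_x = sum(log_x) / len(log_x)
    mean_y = sum(log_y) / len(log_y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(log_x, log_y))
    sxx = sum((a - mean_x) ** 2 for a in log_x)
    return sxy / sxx


print(timescale_height_exponent(Fraction(2, 3)))  # human BMR scaling
print(timescale_height_exponent(Fraction(3, 4)))  # Kleiber's Law scaling

# Synthetic data: NOT the study's measurements.
heights_cm = [150, 160, 170, 180, 190]
t_horizon = [0.01 * h ** 1.1 for h in heights_cm]
print(round(fit_power_law_exponent(heights_cm, t_horizon), 3))
```

Under these assumptions, the 2/3 scaling predicts an exponent of 1 and the ¾ scaling predicts ¾, while the pendulum argument predicts ½.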

Semantic Priming

We expanded on the above findings with a semantic priming paradigm. In the same way that individual beats are bridged together to create the feeling of rhythm, the meanings of words are bridged together to create coherent understanding in language. Words become sentences because the ideas and meanings behind words become integrated across time. This capacity is also limited temporally, as can be demonstrated by trying to understand a sentence when a few seconds are placed between words; it loses its overall meaning, and the words become islands, isolated from the others. This limiting timescale of semantic relationships can be investigated using semantic priming.

The basis of semantic priming was explained by Meyer, Schvaneveldt, and Ruddy (1972), using a spreading excitation model, also referred to as spreading activation theory. The basic idea is that, when a word is processed, activation spreads to other similar or related words, facilitating their retrieval (Collins & Loftus, 1975). For example, when a person sees or hears the word “bread,” other related words like “butter” and “loaf” become activated, such that they are processed faster when presented afterwards. This is referred to as “priming,” in that processing an initial word “primes” someone to respond to a following associated word. This spreading activation from one word to another decays over time and is thus also sensitive to the limiting timescale for temporal integration. We aimed to investigate these limitations in the context of height in our semantic priming study.

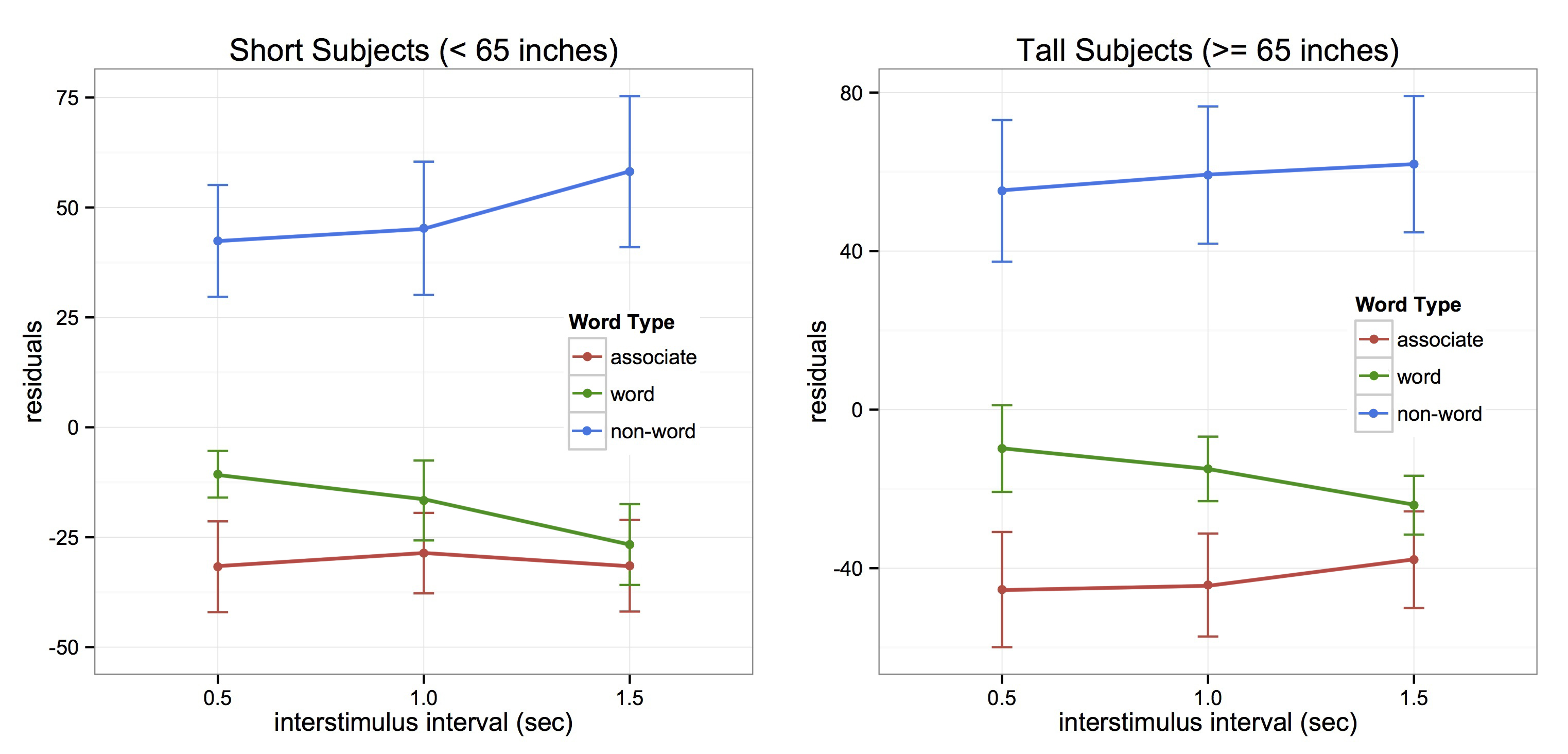

We presented individual words on a screen in pairs, such that the first word could be followed by an associated word (e.g., bread-butter), a non-associated word (e.g., bread-nurse), or a non-word (e.g., nurse-plune). Subjects had to indicate whether each item was a word or a non-word, but we were solely interested in differences in reaction times to associated versus non-associated word pairs. Priming effects are measured by the differences between these reaction times, with faster responses expected to associated words when semantic priming occurs. Our paradigm also involved three specific temporal delays of 500, 1000, and 1500 milliseconds (ms) between words. Results showed that tall participants (5’5″ and taller) were primed at 500 and 1000 ms, but short subjects (shorter than 5’5″) were only primed at 500 ms. This is evidence that shorter people are limited by a shorter interval of time, or that taller subjects are able to bridge events across a longer span of time.
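
As an illustration of how a priming effect is computed from reaction times, here is a minimal Python sketch. The reaction times are hypothetical numbers invented for this example, not data from the study, and the function name is illustrative. A real analysis would also test whether each effect differs reliably from zero, which this sketch does not do.

```python
from statistics import mean


def priming_effect_ms(associated_rts, non_associated_rts):
    """Mean RT to non-associated targets minus mean RT to associated targets.

    A positive value means faster responses to associated words (priming).
    """
    return mean(non_associated_rts) - mean(associated_rts)


# Hypothetical reaction times in ms, keyed by the delay between words.
trials = {
    500: {"associated": [540, 560, 550], "non_associated": [600, 610, 590]},
    1000: {"associated": [570, 580, 590], "non_associated": [600, 610, 620]},
    1500: {"associated": [600, 610, 620], "non_associated": [605, 610, 615]},
}

for delay, rts in trials.items():
    effect = priming_effect_ms(rts["associated"], rts["non_associated"])
    print(f"delay {delay} ms: priming effect {effect} ms")
```

In this made-up example the effect shrinks as the delay grows, which is the pattern a decaying spread of activation would produce.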

Semantic Priming and Music

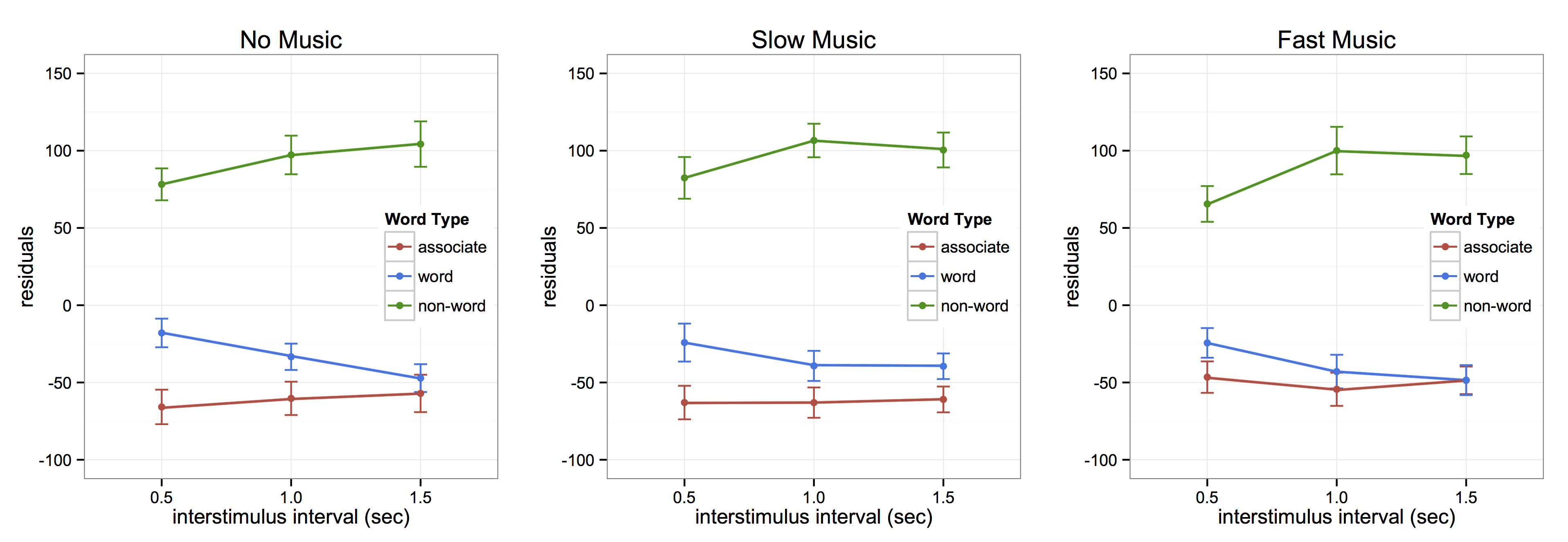

Given the results of both our drumming study and our semantic priming study, we were interested in whether we could alter semantic priming effects using music or rhythm. We expanded our semantic priming paradigm to include fast (137 bpm) and slow (50 bpm) music, such that subjects completed the task under three music conditions: no music, slow music, and fast music. The no music condition replicated the combined results of our initial study, with priming at 500 and 1000 ms, but the music conditions altered the results in different ways. The slow music condition expanded the priming effect to 1500 ms, and the fast music condition collapsed the priming effect, such that it only occurred at 500 ms. These results suggest that music might serve as a tool by which to alter the limiting timescale for temporal integration.

Time-to-Contact

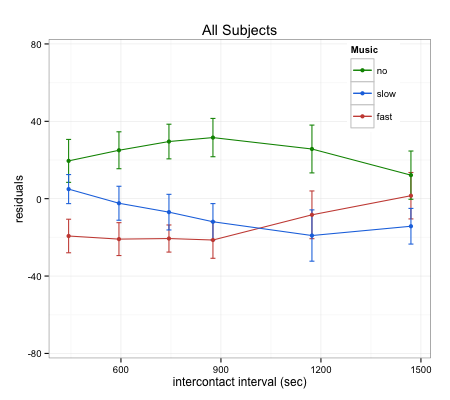

We are currently interested in the mechanism by which music is able to alter temporal integration. It is possible that music modulates the allocation of attentional resources (Large & Jones, 1999) or entrains motor responses to occur on specific beats (Janata, Tomic, & Haberman, 2012; Repp, 2005; Chen, Penhune, & Zatorre, 2008). To further investigate these ideas, we have conducted a time-to-contact study, whereby subjects provide responses indicating their ability to imagine motion. In the study, a ball moves across the screen and goes behind a box. The ball never emerges, but the task is to provide a response when it should be coming out. This gives us the ability to assess perceived motion in the absence of a stimulus, providing a metric of timing. The task was completed using the same slow and fast music as the semantic priming study, in addition to a no music condition. Results showed that the two different speeds of music had markedly similar effects on response times; both slow and fast music generated faster, and more accurate, estimates of the ball’s emergence time. It is possible that music is able to provide a template, facilitating the estimation of imagined motion, but this result further complicates our findings in the semantic priming study. It is clear that music has an impact on temporal integration, but further research is necessary to elucidate its mechanisms. This is the focus of my current and future projects in the Gilden Lab.
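
One way a time-to-contact response might be scored is sketched below in Python. It assumes the ball moves at a constant speed and that response time is measured from the moment the ball disappears behind the box. The occluder width, ball speed, and response time are hypothetical values for illustration only; the study's actual parameters and scoring are not described here.

```python
def expected_emergence_time(occluder_width: float, ball_speed: float) -> float:
    """Seconds a ball at constant speed needs to cross the occluder."""
    if occluder_width <= 0 or ball_speed <= 0:
        raise ValueError("width and speed must be positive")
    return occluder_width / ball_speed


def timing_error(response_time: float, occluder_width: float,
                 ball_speed: float) -> float:
    """Signed error in seconds; negative means the response came early."""
    return response_time - expected_emergence_time(occluder_width, ball_speed)


# Hypothetical trial: 300-pixel box, ball moving at 200 pixels per second.
width_px, speed_px_per_s = 300.0, 200.0
response_s = 1.35  # hypothetical response time after the ball disappears

print(f"expected emergence: {expected_emergence_time(width_px, speed_px_per_s):.2f} s")
print(f"timing error: {timing_error(response_s, width_px, speed_px_per_s):+.2f} s")
```

Keeping the error signed separates how fast a response was from how accurate it was, the two properties reported above.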

References

Chen, J. L., Penhune, V. B., & Zatorre, R. J. (2008). Listening to musical rhythms recruits motor regions of the brain. Cerebral Cortex, 18(12), 2844-2854.

Collins, A. M., & Loftus, E. F. (1975). A spreading-activation theory of semantic processing. Psychological Review, 82(6), 407-428.

Janata, P., Tomic, S. T., & Haberman, J. M. (2012). Sensorimotor coupling in music and the psychology of the groove. Journal of Experimental Psychology: General, 141(1), 54.

Johnstone, A. M., Murison, S. D., Duncan, J. S., Rance, K. A., & Speakman, J. R. (2005). Factors influencing variation in basal metabolic rate include fat-free mass, fat mass, age, and circulating thyroxine but not sex, circulating leptin, or triiodothyronine. The American Journal of Clinical Nutrition, 82(5), 941-948.

Large, E. W., & Jones, M. R. (1999). The dynamics of attending: How people track time-varying events. Psychological Review, 106(1), 119.

Meyer, D. E., Schvaneveldt, R. W., & Ruddy, M. G. (1972, November). Activation of lexical memory. In meeting of the Psychonomic Society, St. Louis, Missouri.

Repp, B. H. (2005). Sensorimotor synchronization: A review of the tapping literature. Psychonomic Bulletin & Review, 12(6), 969-992.

Rose, J., Ralston, H., & Gamble, J. (1994). Energetics of walking. Human Walking, 45-72.

Schmidt-Nielsen, K. (1984). Scaling: Why is animal size so important? Cambridge University Press.